The Differences Between ETL and ELT

For quite some time now, extract, transform, load (ETL) has been the de-facto standard for data warehousing and analytics. More recently, however, another approach, extract, load, transform (ELT), has gained momentum, and takes upon a different and more modern style when enacting data ingestion.

But that doesn’t mean that ELT is going to completely replace ETL tools anytime soon, either. There is still a need for ETL in the enterprise, and the companies who find a way to utilize both in an effective way are the ones able to realize the distinct capabilities each brings. Data integration challenges are pervasive, and companies should look to these two data integration and ingestion patterns to address them.

What is ETL?

Extract, transform, load (ETL) in a nutshell refers to the process of moving data from a source system into a data warehouse. Prior to loading into the warehouse, the data is transformed from a raw state into the format required by the enterprise data warehouse.

The process is composed of three distinct steps, extract, transform, and load:

Extract: Retrieves raw data from an unstructured data pool and migrates it into a temporary, staging data repository

Transform: Structures and converts the data to match the correct target source

Load: Loads the structured data into a data warehouse so it can be properly analyzed and used

Each step is performed sequentially. However, the exact nature of each step – which format is required for the target database – depends on the enterprise’s specific needs and requirements.

Extraction can involve copying data to tables quickly to minimize the time spent querying the source system. In the transformation step, the data is most usually stored in one set of staging tables as part of the process. Finally, a secondary transformation step might place data in tables that are copies of the warehouse tables, which eases loading.

Each ETL stage requires interaction by data engineers and developers to deal with capacity limitations of traditional data warehouses.

What is ELT?

While ETL processes have been the dominant force for data warehousing and analytics, the other method that has grown in popularity is extract, load, and transform (ELT).

Extract: Raw data is accessed from a structured, semi-structured, or unstructured source

Load: The data in its original source state, is loaded into a data lake for downstream analytics or provisioning

Transform: Data is provisioned and transformed to meet the target requirements of an enterprise data warehouse or analytics application. However, the raw data is preserved in the data lake

Instead of a form of data transformation that takes place before the data is actually written, ELT allows any required transformation to take place after loading into the target system. One advantage in this method is the preservation of data in its raw or source state. In particular, companies that are exploring big data require continual access to data in its original state over data quality that has been manipulated to fit a specific database. This helps ensure data veracity and potentially raises the value of the information for data science applications.

ELT is commonly used in scenarios with high-end data engines, specifically a data appliance, like a Hadoop cluster, or even a cloud migration or installation where the original state of the data is maintained. ELT essentially takes a set of raw data and transfers it to a data lake on a target server before proceeding to prepare the information for downstream applications.

Economically speaking, if an enterprise’s technology is licensed by the amount of computing power, ETL traditionally makes more sense. But when database technology is licensed by storage, then ELT is a preferable option since ETL does not scale linearly.

What is the Difference Between ETL and ELT?

A simple way to look at the fundamental differences between ETL and ELT is that ELT is a more modern way to conduct ETL. While the ELT model is constantly evolving and changing how it’s conducted, that also means that the frameworks and tools that are available are not fully developed. Therefore, it is important to identify and implement the proper tools to accurately handle the load and processing of large amounts of data.

Many have found that ELT is more efficient than ETL for development code and in a lot of cases, has been referred to as much more flexible than ETL. The flexibility that arises from ELT is due to the fact that its users can run new transformations, as well as test and enhance different queries. Conversely, if ETL had been used, it would have been a bit more complex and taken longer than ELT.

Additionally, as mentioned above, ELT makes more sense when you are dealing with a data lake architecture that enables big data use cases. Major databases, such as Amazon Redshift and Google BigQuery were designed and optimized to work for ELT.

Data Warehouses vs. Data Lakes

Much like ELT is a new way to approach ETL, data lakes are a new way to approach big data. A data lake is a storage repository which can hold a substantial amount of data in its native format until it’s needed for use at a later time. What makes a data lake different from a data warehouse is that a data warehouse stores data in files or folders, while a data lake uses a flat architecture to store data.

Data warehouses go hand-in-hand with ETL, and are often referred to in the same breath. Data warehouses also support incremental or partial ETL, which means an enterprise can load or reload pieces of data warehouse if the unthinkable happens and an error occurs. Data warehouses are refreshed usually on a set schedule when users won’t access the data, late at night or very early in the morning.

Data lakes utilize the fundamental use cases for a data warehouse and expand upon them. When provisioning data to an enterprise data warehouse or analytics application, with a data lake a user can determine:

- The specific data types and sources they need

- How much of the data they actually need to use

- When they will need to use the data

- Which analytics services they will need to derive.

Comparatively, data warehouses don’t readily allow users that level of flexibility.

When to Use ETL vs. ELT

As innocuous as the switching of letters across two acronyms might seem at first, it’s undeniable that the architectural implications are far-reaching for the organization. Further, ETL and ETL data integration patterns offer distinct capabilities that address differentiated use cases for the enterprise. While ETL is the traditional choice, ELT is more scalable, allows for the preservation of data in the raw state, and provides greater flexibility of use case for data over time.

At the end of the day, each enterprise is going to have its own set of needs and processes that it must meet in order to succeed. The question of whether to use ETL or ELT is not, in fact, so black-and-white. ELT makes a lot of sense when a company needs to handle large amounts of data, while ETL is perfectly acceptable for smaller jobs. There will continue to be a need for both going forward.

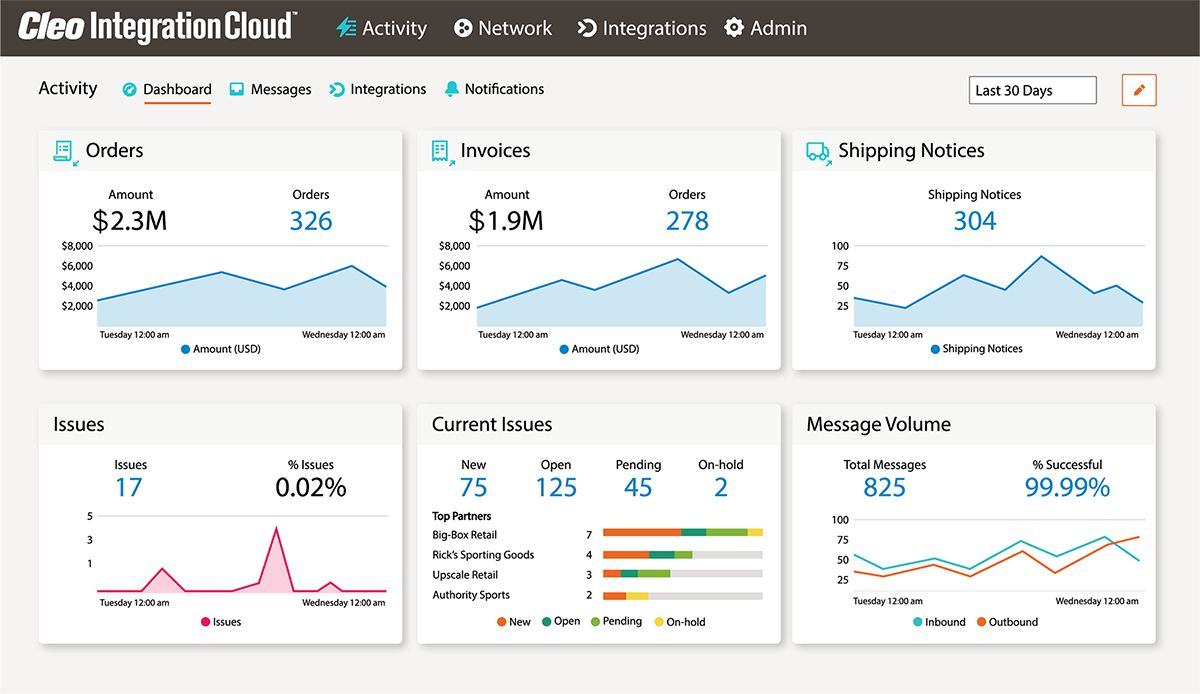

About Cleo